This article is a little esoteric so one may want to skip it unless one is interested in the underlying mechanisms that cause quantization error as photographic signal and noise approach the darkest levels of acceptable dynamic range in our digital cameras: one least significant bit in the raw data. We will use our simplified camera model and deal with Poissonian Signal and Gaussian Read Noise separately – then attempt to bring them together.

Recall that Signal is defined as the mean of the output of a number of pixels and Noise is their relative standard deviation. In order to fully encode visual information present at the output of a sensor’s active pixels we therefore need to be able to accurately store in the raw data both signal and noise, since standard deviation affects and is affected by the signal.

It’s easy to imagine that as long as the sample size is large enough (say 20,000 pixels) and the signal in e- is large enough relative to a given gain, we could typically extract an accurate mean signal and standard deviation measurement to the desired precision, say 1%, after the ADC in units of DN, as discussed earlier. Here for instance is a simulated measured mean signal of 52.5e- with 1 LSB equal to 5e- (gain of 0.2 DN/e-, say a Nikon D810 at base ISO) with no read noise, over a 200×200 pixel sample:

Each bar of the blue histogram corresponds to a discrete e- count, according to a Poisson distribution. The mean and standard deviation derived from it represent the natural signal and are therefore always ‘correct’. They are the benchmarks against which we measure quantization error in the raw data after the ADC.

An ideal signal without noise and with a gain of exactly 1 DN/e- should in theory never show quantization errors: it will simply reproduce in DN the natural signal in e-. The output histogram in DN will look the same as the input blue histogram in e-. However, if the gain differs from exactly 1 DN/e- the shape of the histogram of the raw data produced by the analog to digital converter will start to look different than the input’s, creating an error in the reading of mean and standard deviation: quantization error.

The red bars in the previous figure represent the relative count after the ADC in raw data numbers at a gain corresponding to 5e-/DN. They are the normalized integral of the blue bins within the span of a digit (DN), the result of analog to digital conversion.

Quantization Error = Information in DN less Accurate than Information in e-

Quantization error manifests itself as a less accurate reading of mean and standard deviation in DN compared to e-. We can see from the graph above that any errors caused by the coarser quantization of DN versus e- are minimal at that gain and signal level – and partly compensated for in different parts of the histogram.

The theoretical mean is 52.5e-*0.2DN/e- = 10.5DN. A simulation produces a measured mean of 52.47e- or 10.49 DN. Both theoretical and measured means are within a percentage point or so of each other, indicating that there is enough information in the data at this signal level to properly encode mean information into the raw data.

The standard deviation is determined by taking the squared distance of each e- or DN bin from the mean, averaging those squared distances weighted by the count in each bin, and computing the square root of the result. In this example the theoretical standard deviation is sqrt(52.5) = 7.25e- or 1.45DN. The measured values from the simulation are 7.26e- and 1.48DN, again both within a percentage point or two. Quantization is clearly not an issue at these signal and gain levels.

However as the signal and noise approach and dip below 1 LSB (the last stop of bit depth) quantization errors become more relevant because the size of the standard deviation due to shot noise compared to 1 bit becomes too small to properly spread (dither) the information into neighboring raw levels. The histogram becomes too ‘blocky’ to properly represent the underlying photoelectron distribution, introducing errors in the raw data (DN):

The shape of a Poisson distribution, easily discerned in the blue e- histogram, is lost in the three-bar red DN histogram.

With these conditions the theoretical mean is nevertheless still very close at 5.2e-*0.2DN/e- = 1.04DN, with the measured mean 5.20e- or 1.05DN.

However, the theoretical standard deviation should be sqrt(5.2)=2.28e- or 0.456DN but – significantly – turns out to be a larger than expected 0.522DN after the simulated ‘ADC’, an error of over 14%. So at these low signal levels and with this gain it appears that the mean is still accurately stored in the raw data but the standard deviation no longer is.
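The two examples above can also be checked without a random simulation, by pushing the exact Poisson probabilities through a model ADC. What follows is only a minimal sketch under stated assumptions, not the Octave/Matlab code used for the figures: it assumes the ADC rounds gain × e- to the nearest DN (halves rounded up), and the names `poisson_pmf` and `quantized_stats` are made up for illustration.

```python
import math

def poisson_pmf(n, lam):
    """Probability of counting exactly n photoelectrons at mean signal lam (e-)."""
    return math.exp(n * math.log(lam) - lam - math.lgamma(n + 1))

def quantized_stats(signal_e, gain_dn_per_e):
    """Mean and standard deviation in DN of a quantized Poisson signal, no read noise."""
    # Sum far enough into the upper tail that the neglected probability is negligible.
    n_max = int(signal_e + 12 * math.sqrt(signal_e) + 20)
    bins = {}
    for n in range(n_max + 1):
        # Assumed ADC model: round to the nearest DN, halves rounded up.
        dn = math.floor(n * gain_dn_per_e + 0.5)
        bins[dn] = bins.get(dn, 0.0) + poisson_pmf(n, signal_e)
    total = sum(bins.values())
    mean = sum(dn * p for dn, p in bins.items()) / total
    var = sum((dn - mean) ** 2 * p for dn, p in bins.items()) / total
    return mean, math.sqrt(var)

for signal in (52.5, 5.2):
    mean_dn, std_dn = quantized_stats(signal, 0.2)
    ideal_std = math.sqrt(signal) * 0.2
    print(f"{signal} e-: mean {mean_dn:.2f} DN, std {std_dn:.3f} DN "
          f"(ideal std {ideal_std:.3f} DN)")
```

Under these assumptions the 5.2e- case should land close to the simulated values quoted above: a mean of roughly 1.05DN and a standard deviation of roughly 0.52DN, against an ideal of 0.456DN.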

This makes some intuitive sense because with an infinite sample we should always be able to get an accurate mean. On the other hand the same is not true for standard deviation.

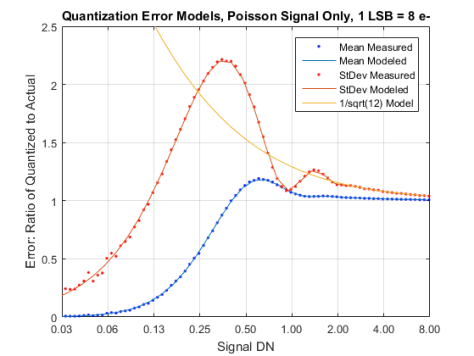

Signal Only: Quantization Error

To understand why let’s take a closer look at the quantization error of both signal and standard deviation when the signal approaches one LSB in the absence of random read noise. The following graph shows the error due to quantization expressed as a ratio of the measured values from the simulation to the theoretical ideal (‘Actual’ in the label) for both mean and standard deviation when the gain is 0.125DN/e-. 8e- is therefore 1 LSB/bit/DN, 16e- is 2 DN, 4e- is half a DN and so on.

Signal Only: Sub-Bit Mean

You can see that down to a signal of 8e- or 1 DN the error in the mean is fairly contained, while below that it degenerates quickly.

Below a mean signal of 0.3DN there isn’t enough shot noise in the system to dither visual information to neighboring raw levels. Eventually the mean becomes a form of quantum mechanical aliasing: most pixel counts get recorded as zero and the proportion of zeros to other levels is no longer representative of signal. Depending on how finicky one is it looks like one-to-one correspondence with mean photoelectron information in these conditions starts degenerating quickly when signal level in the raw data dips below 1 DN.
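To put rough numbers on how the zeros take over, here is a small sketch at the 0.125DN/e- gain used in the graph. It is an illustration under assumptions rather than the model behind the plot: the ADC is assumed to round to the nearest DN with halves rounded up (so exactly 4e- is recorded as 1DN), and `mean_ratio` is a hypothetical helper name.

```python
import math

def poisson_pmf(n, lam):
    """Probability of counting exactly n photoelectrons at mean signal lam (e-)."""
    return math.exp(n * math.log(lam) - lam - math.lgamma(n + 1))

def mean_ratio(signal_e, gain_dn_per_e=0.125, n_max=200):
    """Return (quantized mean / ideal mean, fraction of pixels recorded as 0 DN)."""
    mean_dn = 0.0
    p_zero = 0.0
    for n in range(n_max + 1):
        p = poisson_pmf(n, signal_e)
        # Assumed ADC model: round to the nearest DN, halves rounded up.
        dn = math.floor(n * gain_dn_per_e + 0.5)
        mean_dn += dn * p
        if dn == 0:
            p_zero += p
    return mean_dn / (signal_e * gain_dn_per_e), p_zero

for signal in (16, 8, 4, 2, 1, 0.5):
    ratio, zeros = mean_ratio(signal)
    print(f"{signal:>4} e- ({signal * 0.125:.3f} DN): "
          f"mean ratio {ratio:.2f}, zeros {zeros:.1%}")
```

With these assumptions a 2e- signal (0.25DN) leaves roughly 86% of pixels at zero and the mean reads a little under 60% of its ideal value; at 0.5e- almost nothing is recorded at all. The exact figures depend on how ties are rounded, which is why the rounding rule is flagged as an assumption.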

Signal Only: Sub-Bit Standard Deviation

The standard deviation due to shot noise instead tells a different story: at this gain it starts departing from the ideal at signal levels well above those at which the mean does.

The corresponding red and blue solid lines in the graph represent the modeled mean and standard deviation calculated from first principles, which show good agreement with the results measured in the simulation. The modeled mean is obtained by summing the e- in each DN bin and computing their weighted average; the modeled standard deviation is calculated from first principles from that mean and bin information. The Octave/Matlab code used to accomplish this can be found here.

The solid yellow line, on the other hand, is theoretical shot noise (the square root of the signal) added in quadrature with theoretical quantization noise from a uniformly distributed quantization error (1/√12 LSB). Below about 1.2 DN the standard deviation detaches from it and we have to rely on the first principles derivation.

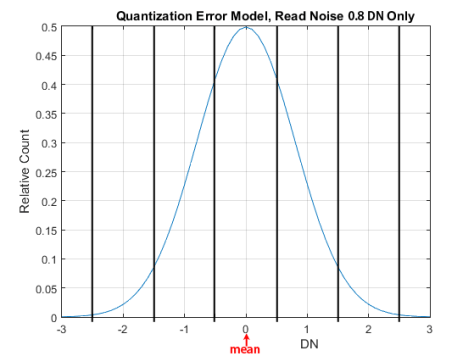

Random Read Noise Only: Quantization Error

A similar procedure can be used to understand how quantization error affects random read noise in the absence of a Signal. The main difference is that the mean of random noise is zero and the noise follows a Normal or Gaussian distribution, which is symmetrical around zero. Recall that we model random noise as continuous so that the shape of its histogram is in theory independent of gain and always a continuous line representing a Normal distribution. For Read Noise of 0.80DN standard deviation this is what it would look like:

Since the mean is zero and the shape of the histogram is symmetrical, it’s easy to calculate sub-bit quantized read noise standard deviation from first principles simply from the values that fall within the first couple of DNs. Running the simulation and the model results in the following Quantization Error plot for read noise only as its standard deviation approaches 1 LSB and beyond:

The orange curve shows that the first principles model tracks very well the measured standard deviations from the simulation throughout the shown range. The Octave/Matlab code used to accomplish this can be found here.

The blue line on the other hand represents the simpler model of quadrature addition of read noise and standard quantization noise (1/√12). The simpler model works well with read noise down to about 1/2LSB, but stops being representative of measured standard deviation below that.
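The comparison between the two read noise models can be sketched as follows. This is an assumption-laden illustration, not the linked Octave/Matlab code: read noise is taken as a zero-mean continuous Gaussian expressed in DN, the ADC is assumed to round to the nearest DN, negative values are assumed to be preserved (as with a black level offset), and the function names are invented here.

```python
import math

def normal_cdf(x):
    """Cumulative distribution of the standard Normal."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def quantized_read_noise(sigma_dn, k_max=50):
    """Std in DN of zero-mean Gaussian read noise after rounding to the nearest DN."""
    var = 0.0
    for k in range(-k_max, k_max + 1):
        # Probability that the continuous noise value falls in the bin centered on DN k.
        p = normal_cdf((k + 0.5) / sigma_dn) - normal_cdf((k - 0.5) / sigma_dn)
        var += k * k * p  # the mean is zero by symmetry
    return math.sqrt(var)

def simple_model(sigma_dn):
    """Read noise added in quadrature with 1/sqrt(12) LSB quantization noise."""
    return math.sqrt(sigma_dn ** 2 + 1.0 / 12.0)

for sigma in (1.0, 0.8, 0.5, 0.3, 0.2):
    print(f"RN {sigma:.1f} DN: first principles {quantized_read_noise(sigma):.3f} DN, "
          f"quadrature model {simple_model(sigma):.3f} DN")
```

At 0.8DN the two should agree to about three decimals, while at 0.2DN almost every sample rounds to zero, so the quantized standard deviation falls well below both the quadrature prediction and the read noise itself.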

Adding Sub-Bit Read and Shot noise Together

Now that we have a good understanding of how both sub-LSB read and shot noise behave, one would think that we could use the usual trick of adding them in quadrature to obtain total sub-LSB noise. Unfortunately that’s not possible because quadrature addition of random variables produces accurate results only if they are uncorrelated. In this case however they are correlated through the size of the LSB and the signal. Dr. Joofa has a brilliant explanation of how in this DPR post, and I will let the interested reader pursue it from there.

Fortunately we can move forward even without fully understanding correlated quantization noise because the exercises above have shown the accuracy of the simulation at sub-LSB levels – as seen in the agreement in the pictures above between ‘measured’ and ‘modeled’ values using first principles.

Sub-Bit Total Noise

Let’s therefore simulate what happens to total read and shot noise (total standard deviation) when the signal approaches 1 DN at a fairly aggressive gain of 0.1 DN/e-, typical of the Sony a7S at base ISO for instance. The dotted curves are measurements from a simulation with the given signal, gain and read noise – effectively the bottom part of a Photon Transfer Curve.

The solid lines represent the theoretical addition of shot and read noise in quadrature without taking quantization into account. One can effectively read the read noise standard deviation off the y-axis intercept where signal approaches zero:

However, note the characteristic undulations induced by quantization up to a read noise of 0.4DN. Above that the quantization error appears to be swamped by read noise and can be accounted for by the simple rounding error model, with zero mean and 1/√12 LSB standard deviation rms. Below is the same data with the solid curves this time representing shot and read noise added in quadrature with 1/√12 LSB quantization error. The y-axis intercepts of the solid curves here are therefore sqrt(RN^2+1/12):

The model of combined shot, read and quantization noise is seen to predict signal and total noise virtually perfectly with read noise as low as 0.6 LSB in these conditions – but somewhere around 0.5 LSB it starts falling apart.
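For readers who want to probe where the quadrature model starts to fail, total noise can also be computed exactly rather than simulated. The sketch below assumes the raw value is round(gain × N + R), with N Poisson photoelectrons and R zero-mean Gaussian read noise in DN, no clipping at zero, and a read noise greater than zero; `total_noise_dn` and `quadrature_model` are illustrative names, not the author's.

```python
import math

def poisson_pmf(n, lam):
    """Probability of counting exactly n photoelectrons at mean signal lam (e-)."""
    return math.exp(n * math.log(lam) - lam - math.lgamma(n + 1))

def normal_cdf(x):
    """Cumulative distribution of the standard Normal."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def total_noise_dn(signal_e, gain, read_noise_dn):
    """Exact std in DN of round(gain*N + R), N ~ Poisson, R ~ Normal(0, read_noise_dn)."""
    n_max = int(signal_e + 12 * math.sqrt(signal_e) + 20)
    k_lo = math.floor(-8 * read_noise_dn) - 1
    k_hi = math.ceil(gain * n_max + 8 * read_noise_dn) + 1
    m1 = m2 = 0.0
    for n in range(n_max + 1):
        w = poisson_pmf(n, signal_e)
        center = gain * n  # analog level in DN before read noise is added
        for k in range(k_lo, k_hi + 1):
            # Probability that read noise moves this level into the bin of DN k.
            p = (normal_cdf((k + 0.5 - center) / read_noise_dn)
                 - normal_cdf((k - 0.5 - center) / read_noise_dn))
            m1 += w * p * k
            m2 += w * p * k * k
    return math.sqrt(m2 - m1 * m1)

def quadrature_model(signal_e, gain, read_noise_dn):
    """Shot, read and 1/sqrt(12) LSB quantization noise added in quadrature."""
    return math.sqrt(gain ** 2 * signal_e + read_noise_dn ** 2 + 1.0 / 12.0)

for rn in (0.6, 0.2):
    for signal in (2, 5, 10, 20):
        exact = total_noise_dn(signal, 0.1, rn)
        model = quadrature_model(signal, 0.1, rn)
        print(f"RN {rn} DN, {signal:>2} e-: exact {exact:.3f} DN, model {model:.3f} DN")
```

At a read noise of 0.6DN the exact and quadrature values should sit within a percent or so of each other, in line with the plot; lowering the read noise lets one see how the agreement degrades under these assumptions.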

Here is a similar plot with a gain of 0.4DN/e-, typical of cameras like the Nikon D7200 around base ISO, with read noise less than one DN. Again it looks like above 0.4LSB read noise quantization error ‘ringing’ is quenched as seen earlier and the simulation behaves according to the theoretical quadrature sum of read, shot and 1/√12 LSB quantization noise:

For completeness we can show plots of measured mean signal versus signal-to-noise ratio. The error is not as apparent in this plot because the y-axis has a much larger log2 scale. This is how it would look with a gain of 0.1DN/e- on a Photon Transfer Curve. The solid lines represent the quadrature sum of theoretical read, shot and 1/√12 LSB quantization noise:

So it appears that above about 0.5DN read noise standard deviation there is no perceived correlated noise and quantization error in sub-LSB mean signals is well explained by the 1/√12 LSB rms model, which for all intents and purposes can simply be considered an additional component of random read noise, swelling it a bit.

Interestingly, 0.5DN read noise also appears to be the threshold above which posterization of a smooth gradient is no longer visible, as Jim Kasson shows in this excellent demonstration.

Jack, I just measured a black frame of Olympus E-M1II at ISO 250

the stddevs measured are

ISO250 R=0.776 G1=0.682 B=0.728 G2=0.768

I read that you use the first principles model for actual values < 0.65 but .. for quick results .. can I assume that for the lowest value 0.682 the simple formula works with a negligible error ?

RN = sqrt( 0.682*0.682 – 1/12) = 0.618 ?

Sure Ilias. Weird that large difference between G1 and G2. If that G1 is correct it looks like the E-M1II could have used a 14-bit ADC. How large of a sample area did you use? Try to keep it to 400×400 (200×200 in each channel). Also keep in mind that most people do not factor out the 1/12 variance so the values you typically see around for RN include it.

Jack

Thanks Jack,

The sampling area was large. The difference G1 vs G2 was more or less consistent in all areas and all ISOs ..

The histograms (all of them not only the G1) were deviating strongly from gaussian ..

.. but the samples came from a preproduction body so I don’t know if this is valid for production bodies ..